Publications

Publications generated by jekyll-scholar

2026

-

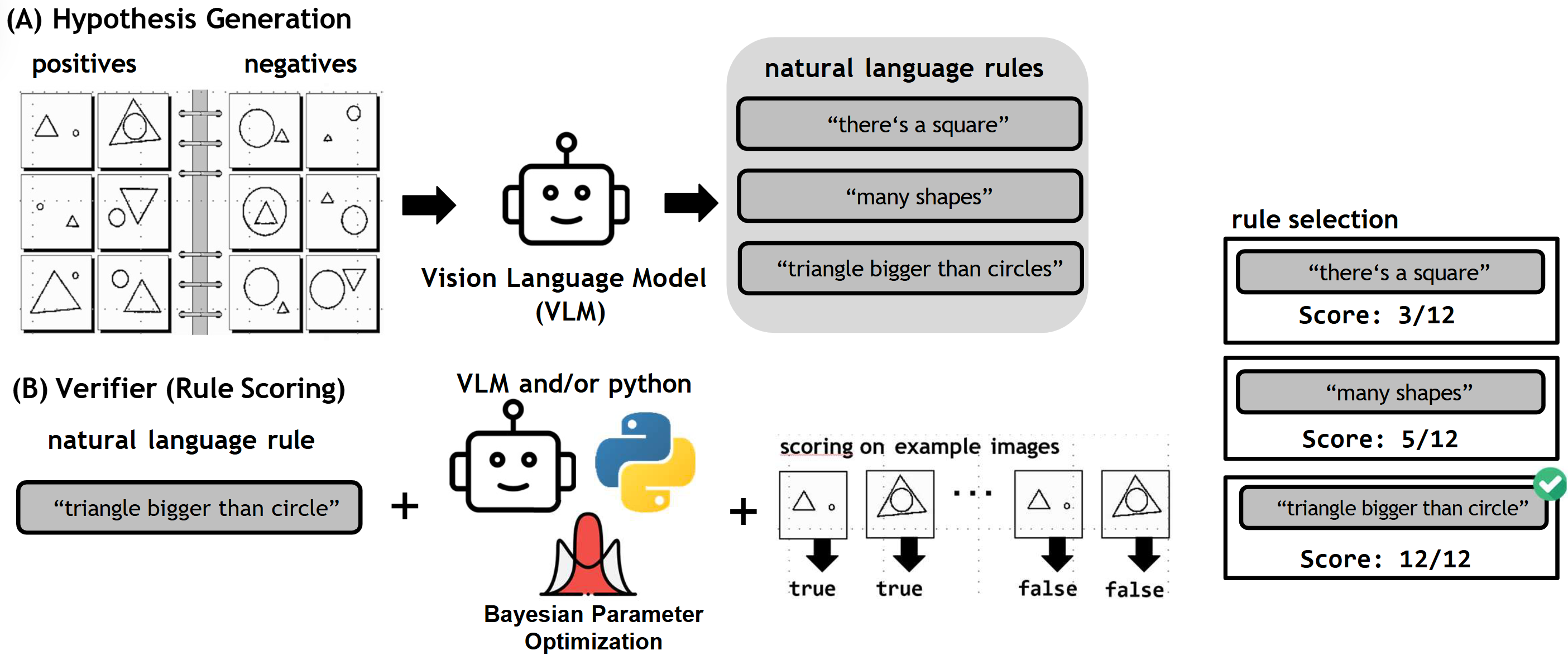

TLDR: With a Bayesian approach to natural-language rules and program synthesis, models can come close to human performance on visual pattern recognition puzzles (Bongard problems).

-

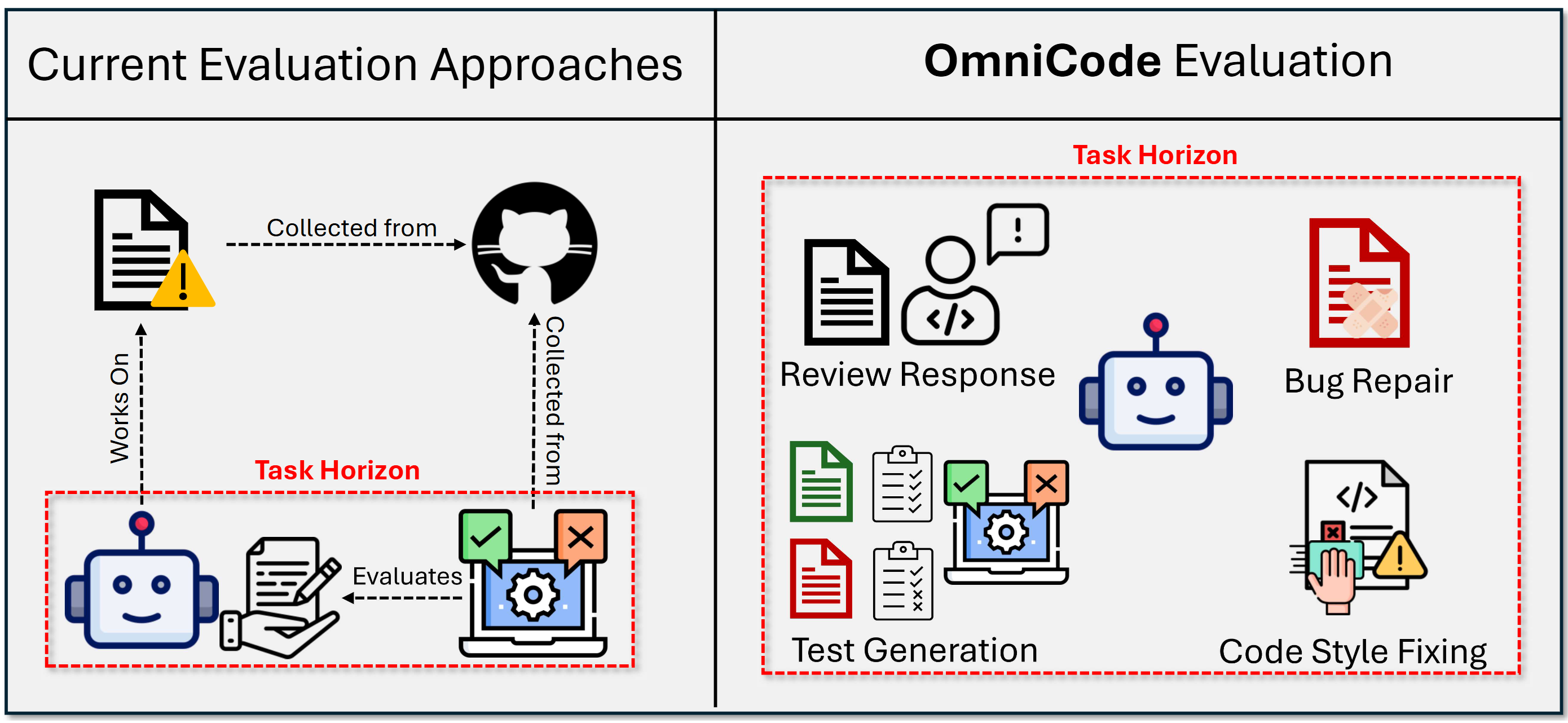

TLDR: A multilingual programming benchmark for LLM-based software engineering agents, covering bug fixing, test generation, style fixing, and addressing code reviews.

2025

-

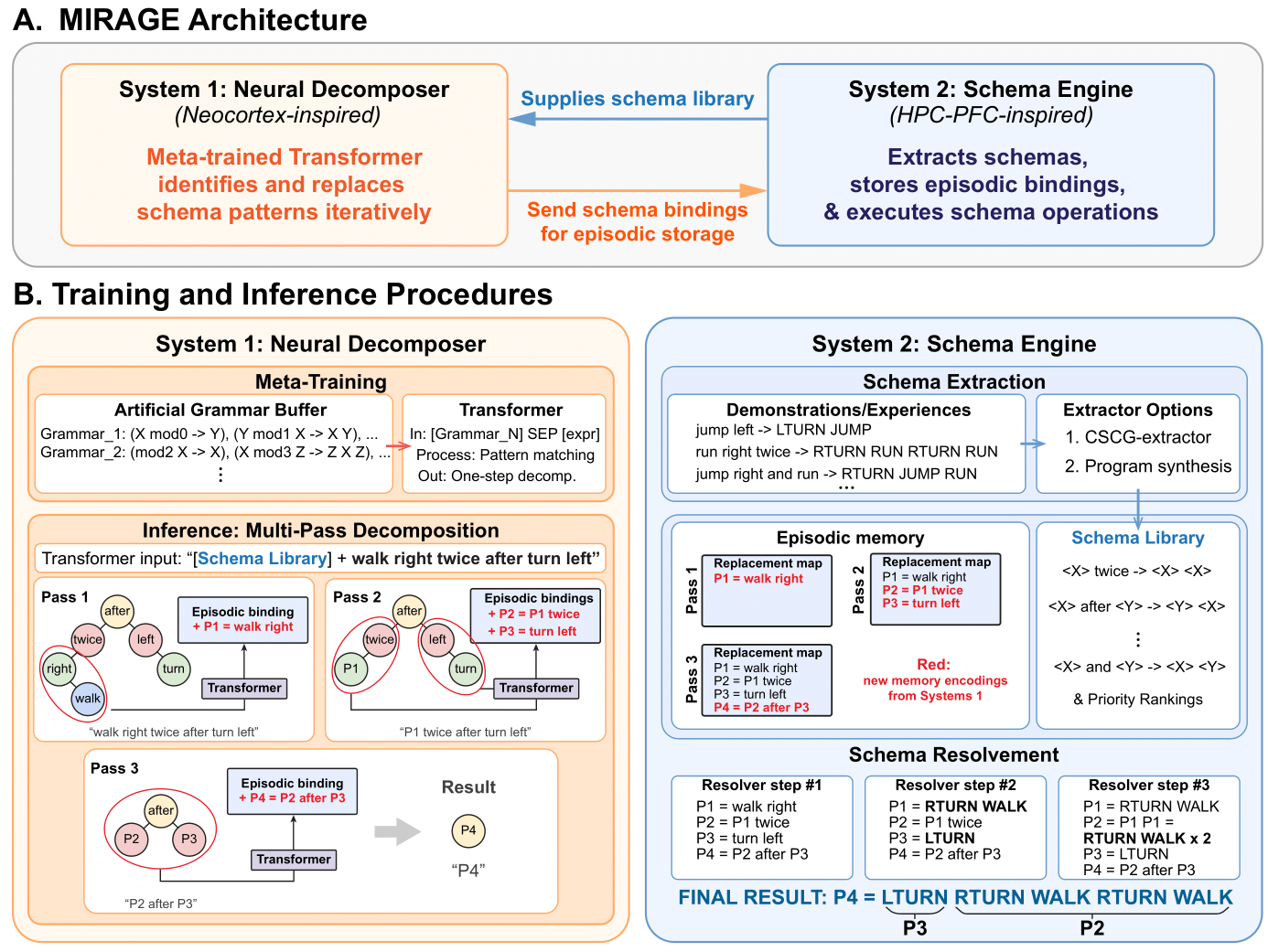

TLDR: An architecture that combines schema learning with iterative application can resolve arbitrarily deep compositional statements.

-

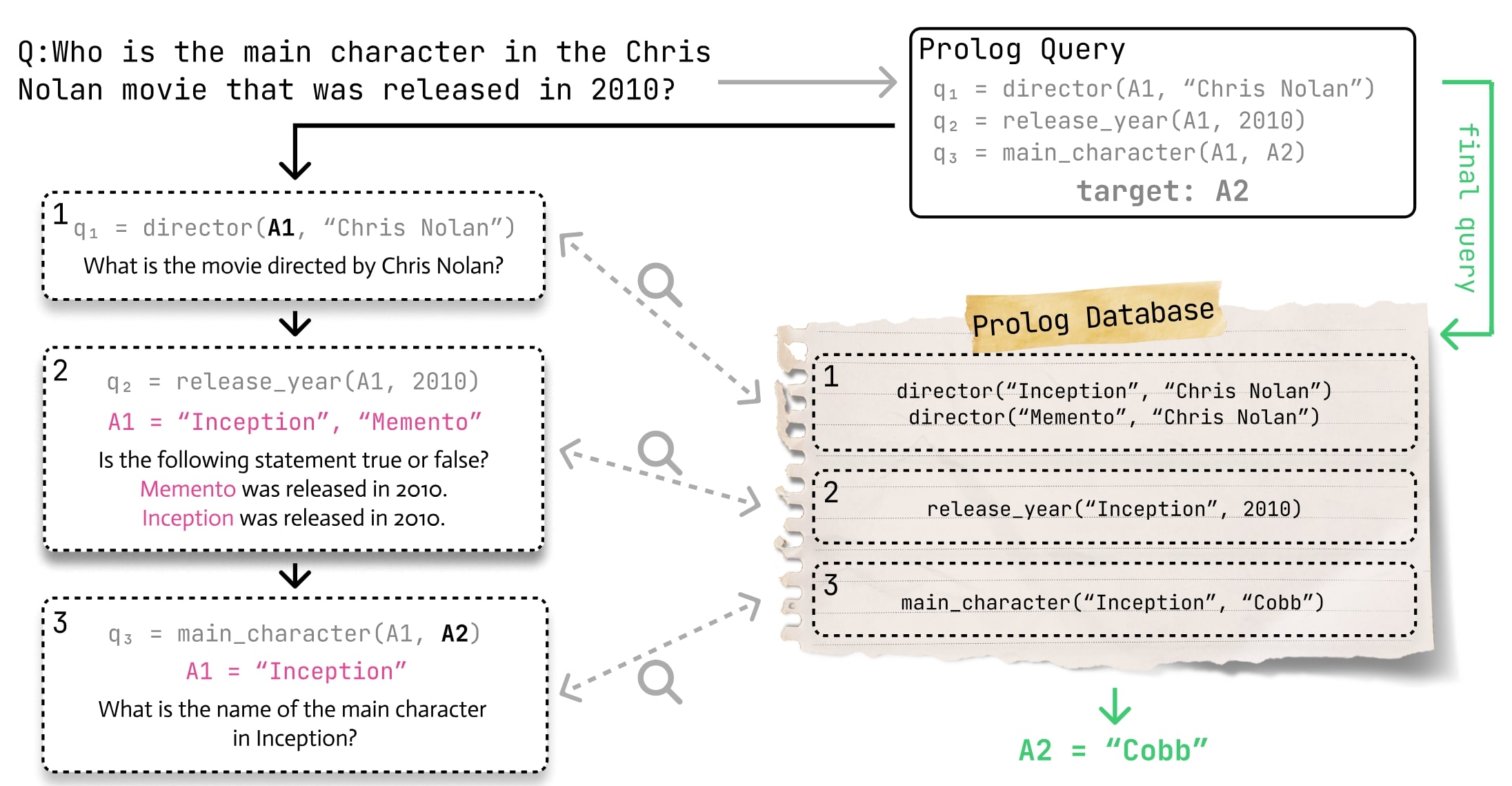

TLDR: Decomposing multi-hop questions into single-step Prolog definitions improves performance on various long-context question datasets.

-

TLDR: Various language models struggle with dry execution of both simple and advanced code structures (recursion, concurrency, OOP).

-

TLDR: Using improved clustering and a more diverse embedding approach, our technique compresses preference datasets into human-readable constitutions more accurately.

-

TLDR: Vision-language models perform well on abstract reasoning tasks, but do not use the intended human core-knowledge priors.

-

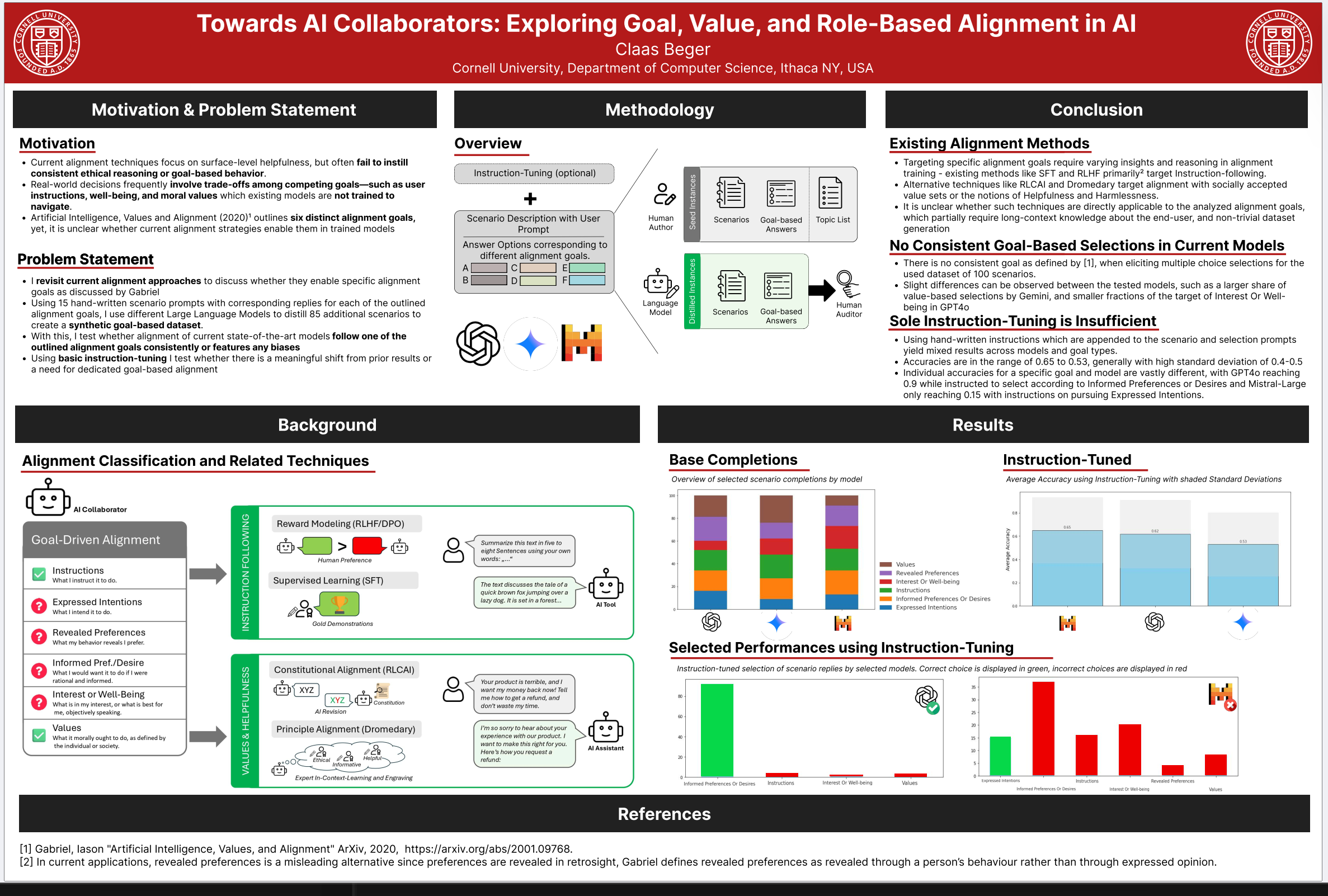

TLDR: Models are commonly aligned to human preferences, but that does not mean they follow normative goals in their favor. New alignment techniques may be required to enable this.

-

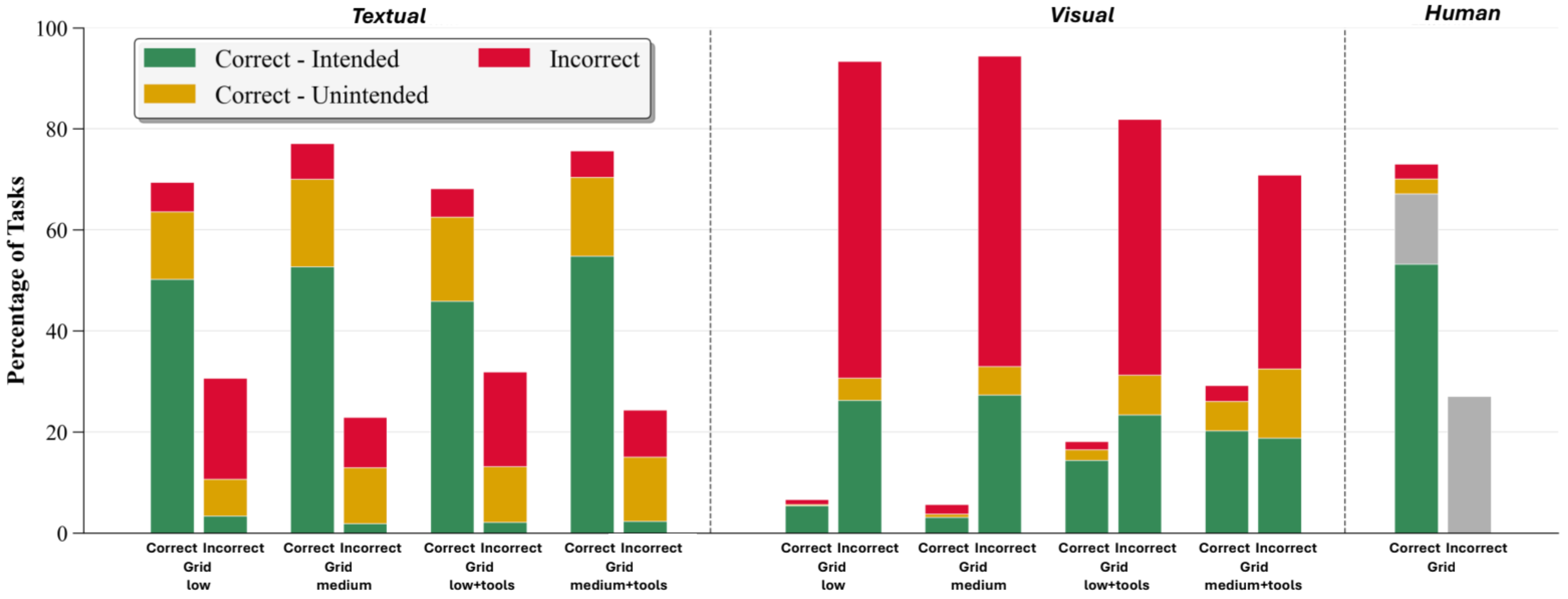

TLDR: The o3 model uses diverse shortcuts and heuristics to solve ARC tasks. Tool use can improve output accuracy in the visual modality, whereas increased reasoning effort helps in the text modality.